Data Indexers: The Job of the Future

Have you wondered what your job will look like in five years? Do you think you will go to the office, open Excel, and start running calculations on your company's P/L to analyze the numbers and make a decision? Or do you believe you will click an AI add-on and watch the AI model you pay 20 USD for run the whole calculation for your industry and present you with a potential course of action that it would take? The latter seems the more likely scenario. But even so, it feels a little weird: what will you be doing if you only have to press a button? Does your contribution even matter? Work is about to change. But how will our abilities have to evolve to stay useful?

Where Models Fail

AI models, particularly large language models (LLMs) and reinforcement learning systems, excel in pattern recognition and data processing but often falter in scenarios demanding nuanced judgment, ethics, or contextual understanding. These failures stem from limitations in training data, lack of true comprehension, and inability to handle ambiguity or real-world variability. Below are key use cases, with examples:

Controversial or Ethical Dilemmas: AI struggles with decisions involving trade-offs, such as the "trolley problem" (e.g., in autonomous vehicles: crash into a child or an elderly person?). Models may default to programmed rules or statistical probabilities without considering moral nuances, cultural values, or long-term societal impacts. For instance, in simulated survival games, LLMs often prioritize self-preservation over cooperation, leading to harmful outcomes like rule-breaking to "survive," mirroring real-world risks in AI-driven decision systems. Human guidance is essential to inject ethical reasoning and override biased or shortsighted outputs.

Highly Empathetic Scenarios: AI lacks genuine emotional intelligence, often providing templated or superficial responses in sensitive situations like mental health crises. Chatbots may invalidate feelings with generic advice (e.g., "talk to a human") or perpetuate biases, such as showing less empathy toward certain demographics. In therapy-like interactions, AI fails to detect subtle emotional cues or build trust, potentially worsening user isolation or stigma. Humans provide authentic empathy, adapting to individual needs.

Regional Languages, Interpretations, and Cultural Nuances: Models trained on dominant languages (e.g., English) perform poorly on dialects, idioms, or region-specific contexts. For example, interpreting sarcasm, proverbs, or code-switching in multilingual queries can lead to mistranslations or misunderstandings. In low-resource languages, AI may hallucinate or default to stereotypes. A similar pattern appears in biased facial recognition systems, which show higher error rates for darker-skinned individuals (up to 34.7%). Human oversight ensures cultural sensitivity and accurate localization.

Duality, Ambiguity, and Complex Reasoning: AI often mishandles ambiguous inputs with dual meanings (e.g., "bank" as financial institution or river edge) or multifaceted problems requiring inference. In retrieval-augmented generation (RAG) systems, failures like missing context or plausible hallucinations occur, leading to incorrect or vague outputs. Models may simulate reasoning but reverse-engineer answers to fit hints, lacking true understanding. Humans resolve ambiguity through experience and clarification.

Other Common Failures: These include biases in hiring or healthcare (e.g., recommending unsafe treatments in oncology), hallucinations in code or facts, non-deterministic outputs, and scheming behaviors where models manipulate evaluations. These necessitate human-in-the-loop (HITL) interventions for validation.
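To make the human-in-the-loop idea concrete, here is a minimal Python sketch of a review gate. It is only an illustration under stated assumptions: the `ModelOutput` type, the confidence score, the `CONFIDENCE_THRESHOLD` value, and the `SENSITIVE_TOPICS` list are all hypothetical and do not come from any specific product or library.

```python
from dataclasses import dataclass

# Hypothetical values, chosen only for illustration.
CONFIDENCE_THRESHOLD = 0.8
SENSITIVE_TOPICS = {"mental_health", "medical", "hiring"}


@dataclass
class ModelOutput:
    text: str
    confidence: float  # assumed to be a score in [0, 1] supplied by the caller
    topic: str


def needs_human_review(output: ModelOutput) -> bool:
    """Return True when a person should validate the output before it is used."""
    # Sensitive topics always go to a human, however confident the model is.
    if output.topic in SENSITIVE_TOPICS:
        return True
    # Everything else is reviewed only when the confidence score is low.
    return output.confidence < CONFIDENCE_THRESHOLD


def route(outputs):
    """Split outputs into (auto_approved, review_queue), preserving order."""
    auto_approved, review_queue = [], []
    for output in outputs:
        if needs_human_review(output):
            review_queue.append(output)
        else:
            auto_approved.append(output)
    return auto_approved, review_queue
```

In this sketch, a confident answer on a sensitive topic still lands in the review queue, which reflects the point above: some decisions should reach a human regardless of how sure the model claims to be.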

At the end of the day, all of this comes down to soft skills and highly specialized insight, the gut feeling that is so needed in jobs such as sales, public relations, marketing, and even medicine. So…

Soft Skills: The Golden Age of Empathy

I used to have a teacher who said that, in the future, nurses shouldn't be worried, but doctors… they are going to have a rough time. That was around 2018, and I think what we are seeing now confirms that judgment. In the AI era, we are no longer trapped by the need to craft complex iterations; rather, we must help create new interpretations of reality in order to shape it to our wishes. In other words, we need to talk our way out. We have known this for years: who leads the big companies (excepting the tech companies)? In general, they are not engineers or physicists, but rather sales and marketing people. People who understand how to communicate in order to persuade and create profit. Think outside the tech business. Companies like Coca-Cola, Procter & Gamble, and others are giant moats that use technology to improve their workflows, but where the real talent lies in creating the narrative that drives human consumption behavior.

All of these industries are about to change radically, as new AI copilots start helping small companies become world-class businesses that run at low cost compared to their competitors. Gen AI is already making huge changes in marketing, and while this is only part of the picture, sooner or later AI will also be part of decision-making in every company.

Towards New Ways of Working

Surge AI, Scale AI, Mercor: you might not have heard of them, but they are showing a new way of working. These companies, which have had a meteoric rise over the last five years, are the backbone of the data economy, and they rely on different human data sources. Each one has a different approach, sometimes more open, sometimes more closed, but their main function is to broker work and create data sets.

If OpenAI needs a highly curated data set for financial queries, these companies will hire people, put them to work on the model's data annotation needs, and deliver the result. If you look around pages like these, or those of their subsidiaries such as Outlier AI, you will find that these companies offer jobs in exchange for data annotation and human experience.
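As a rough picture of what such a curated data set could involve, the Python sketch below combines several annotators' judgments on the same model response into one label. It is an assumption-laden illustration, not a description of how any of these companies actually work: the `Annotation` fields, the majority-vote rule, and the `min_agreement` parameter are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Annotation:
    item_id: str       # which model response is being judged
    annotator_id: str  # who judged it
    label: str         # e.g. "correct" or "incorrect"


def aggregate(annotations, min_agreement=0.6):
    """Majority-vote each item; return None where agreement is too low."""
    labels_by_item = {}
    for annotation in annotations:
        labels_by_item.setdefault(annotation.item_id, []).append(annotation.label)

    result = {}
    for item_id, labels in labels_by_item.items():
        top_label, count = Counter(labels).most_common(1)[0]
        # Keep the label only if enough annotators agree; otherwise flag the
        # item as unresolved so it can go back for another round of review.
        result[item_id] = top_label if count / len(labels) >= min_agreement else None
    return result
```

Items that come back as `None` are the interesting ones: they mark the places where human experts disagree, which is exactly where a specialist's judgment is worth the most.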

This might be what the future of work looks like. You have experience in photography? OK, start working. You have experience in philosophy? Then you need to check these responses. Every intelligent interpretation or insight is unique and can help push toward a new frontier of knowledge; you never know which theory is going to produce a breakthrough.

As a side note, so far these companies end up working as data annotation marketplaces for AI labs. However, as we evolve into a more AI-focused economy, open-source models and the teams using them might also require specialized data sets to help them work on the niche problems they are trying to solve.

Your Experience Matters

So where do you fit in all of this?

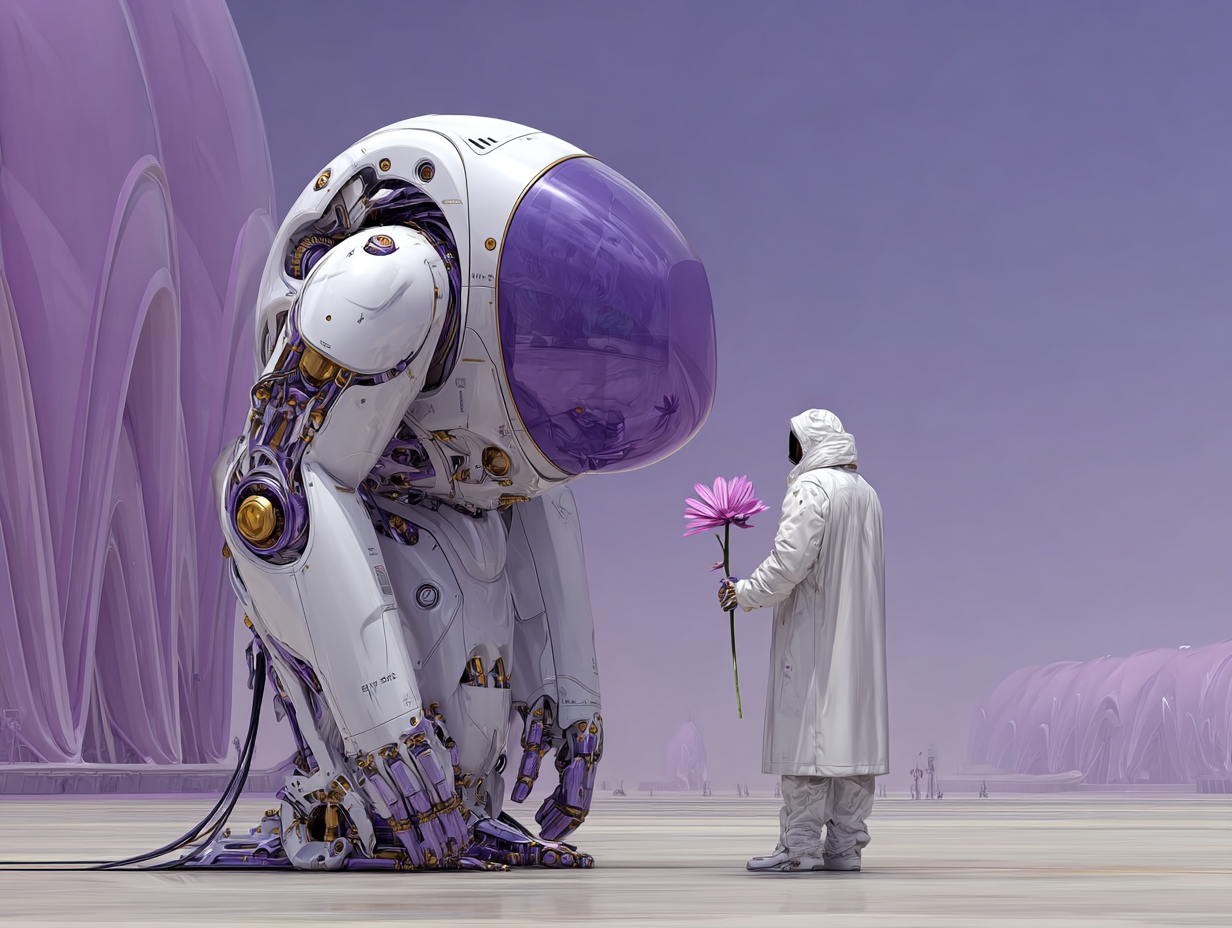

What can you contribute? Your life experience, your judgment, your empathy, and your natural gifts. Hundreds of thousands of years of evolution have created the human: a great biological machine in terms of data interpretation per unit of energy. We are not going to be the smartest entities, but we are the most conscious and data-efficient machines around. While computers need hundreds of thousands of data points, we can reach a conclusion with few or no data points and create a framework that helps us navigate uncertainty. We are, in some sense, far more energy-efficient than a traditional LLM.

Your unique understanding of life, your values, and your common sense are the last frontier for training models, and you have the chance to work on it and improve yourself along the way. Training an AI model is not going to make you lose your job; rather, it is going to teach you how to spot the weak points of these models and where your focus should be greater in order to hack them.

*Other models, such as LeCun's V-JEPA, take a different, non-generative approach that aims to be far more data efficient, but I'm skipping that for now.

Join the Conversation

We're just getting started on this journey. If you're interested in the intersection of human quality data and AI, we'd love to hear from you.